On May 30, 2023, the NY Times published this article: A.I. Poses ‘Risk of Extinction,’ Industry Leaders Warn. It notes that AI industry leaders are rather concerned that the tools they have built and are selling may kill us all. Your fight-or-flight response instantly engages when reading headlines like this - which, in the business of selling papers and advertising, is the publisher’s intent. You are going to have a reaction. How certain can you be about how you feel, and about the risk posed?

Chatty Cathys

There are quite a few media predictions about ChatGPT and other LLMs these days, mostly saying that this 1968 film is going to happen unless we immediately institute major regulatory intervention.

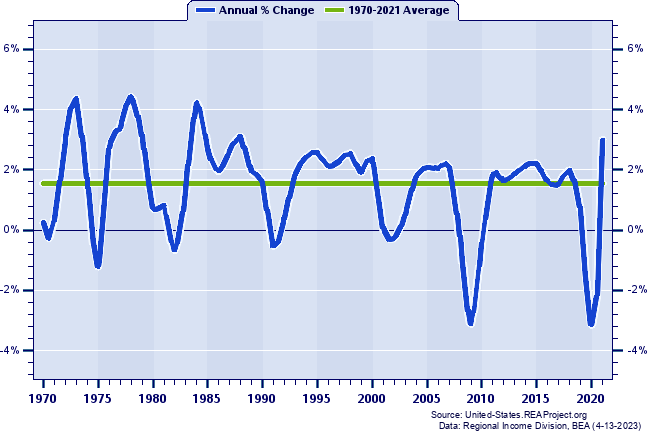

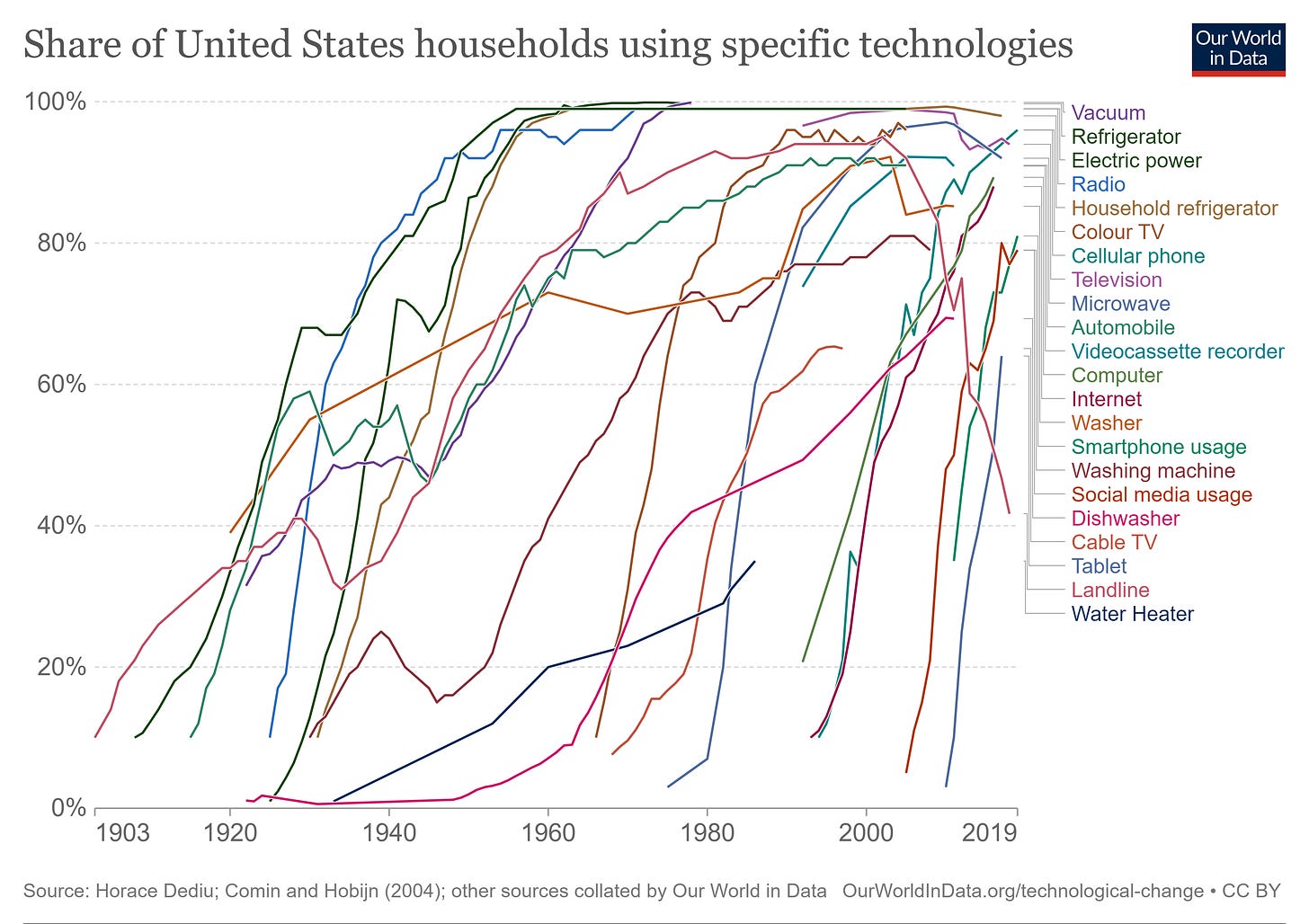

The creators of US atomic bomb technology had grave concerns. Not too long ago, there was much in the media regarding the health impacts of cell phones. The advent of personal computers in the 1980s was going to destroy our economy and put millions of people out of work. You can see below that this prediction, made around 1980, did not pan out. As is well known now (and there was lots of fear then), the emergence of more powerful, more compact, Moore’s Law-driven computing horsepower had quite the opposite effect. Someone had to manufacture the chips, the hard drives (at first magnetic, now mostly solid-state), the machines themselves, keyboards, and monitors. Then there was the still-developing connectivity: networks, the Web, Wi-Fi, Bluetooth. Much more importantly, this brought on the age of software and of complex jobs requiring highly educated humans: software design, user interface design, software engineering, project management, program management, API development, integration, testing, and on and on.

This last panel is clipped from the 2017 McKinsey paper: “Jobs Lost, Jobs Gained: Workforce Transitions In A Time Of Automation”, which can be found here.

The Long Conversation

I assert that the advent of the wheel, fire, steam, machine industrialization, telephone, electricity, and cell phones, naming just a few of the new technologies we’ve seen, all elicited the same panicked responses, “and still, it’s left undone”. If markets cannot be forecasted and timed, why would we think technologies and their impacts can be? Our brains love doomsday forecasts. The twitchy, witchy brain strikes again. Is this time different? No way to tell. History says, “unlikely”.

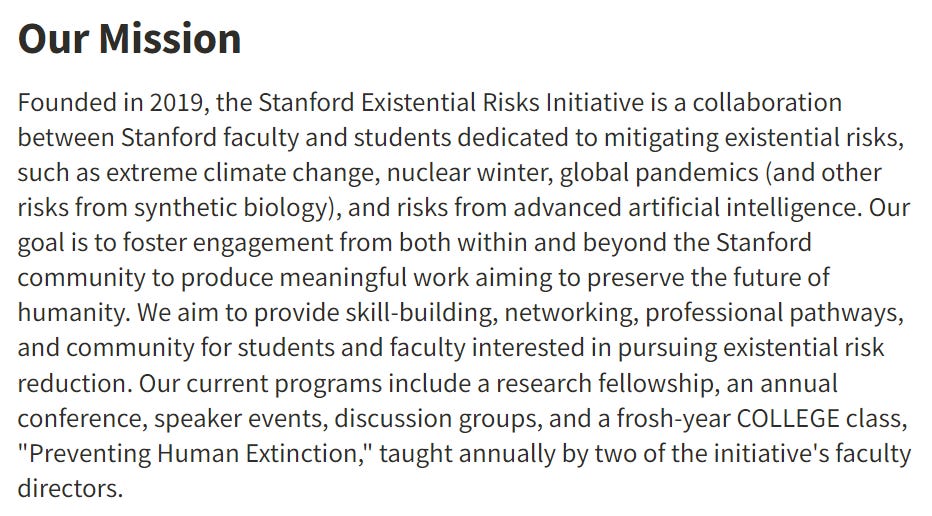

Stanford, among others, has established an existential risks project.

Could any of these events result in human extinction? Of course they could. Perhaps it is the fear-driven conversation, panic, and rush to study that prevents this from happening. From that perspective, the action stimulated is a good thing. I am not saying that we should do nothing.

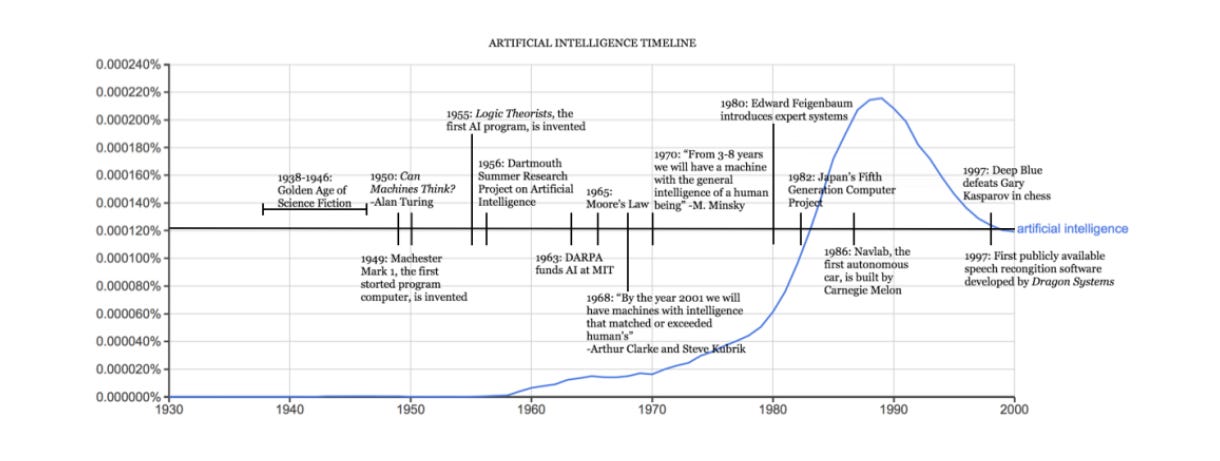

What I am saying is that panicked reaction, attempting to stop the emergence of AI, and excessive regulation neither stop progress nor help us. By the way, AI is not new. This illustration is from Rockwell Anyoha’s 2017 paper “History of Artificial Intelligence”.

Technological change creates jobs and always has. Are some jobs eliminated? Yes. More are created. With AI, someone has to create and organize the content that fuels LLMs, for example. The software will require extensive modifications. Industry-specific tools will be developed. Technological change can be quick, for certain.

In the case of AI, it has been much slower than predicted. Building this kind of software is extraordinarily difficult. It is awfully hard to mimic the human brain when we do not understand how the brain works. Well, we understand some of the physical aspects. But how you think, create, formulate plans? Not a chance. And as we see with all software, there are many types of errors that pop up and plenty of inaccurate responses from the various AI tools (which then require human intervention to fix). AI output has to be evaluated for reasonableness and, at least right now, fact-checked. As a result, AI development has been relatively slow.

In 1970, Marvin Minsky told Life Magazine, “In from three to eight years we will have a machine with the general intelligence of an average human being.”

So, Are We Gonna Die?

All of these technologies have created new jobs. Jobs that require new knowledge and skills. Adaptability is the critical factor here, not fear of the new and unknown. Train your brain to harness the fight-or-flight mechanism. We have AI. The genie software is out of the box. If it is the HAL of our world, it is because we made it so. We can, and I postulate we will, make it not so. We persistently see disaster looming. This is the human condition and how we have survived so successfully. What we do not persistently see is disaster, at least long-lasting, human-race-eliminating, unrecoverable disaster, occurring. With AI, jobs will evolve to make sure we train the machines well. We can use AI tools to rid ourselves of the mundane and enable more of what human thought can uniquely add. The AI machines can only spit out what the human brain has created. They have yet to exhibit the ability to create the way humans can. Let your twitchy brain gyrate. Then thoughtfully determine how you are going to use any emerging tech, AI or otherwise, to better do what you uniquely do.

“Don’t move the way fear makes you move.”

- Rumi

For a longer discussion of the current and potential future of AI, see Marc Andreessen’s “Why AI Will Save The World”.

Sundry:

Compounding, whether it is your savings and investments or the use of technology, takes time in the market, not timing the market.

Human nature is to run from the rustling sound, when in today’s world we need, nearly every time, to ignore the potential existential explosion.

Another fear I hear all the time: “Hey Mark, what do you think is going to happen when the dollar is no longer the reserve currency?” Well, read this (excerpt below) and then come talk to me. Note: The debt ceiling, as I wrote two weeks ago, is not going to be an issue - here’s today’s WSJ article.

Today’s musical companion? The Pretenders, with a terrific message.